ML+X Nexus: Crowdsourced ML and AI Resources

More than just a repository of content from across the internet, ML+X Nexus is a curated, community-driven platform that captures the collective knowledge and experiences of the ML+X community and the broader UW-Madison campus. It serves as a growing knowledge hub: preserving past discussions, highlighting widely used tools and datasets, and evolving alongside our community’s needs. Nexus is organized into four sections, accessible from the left sidebar:

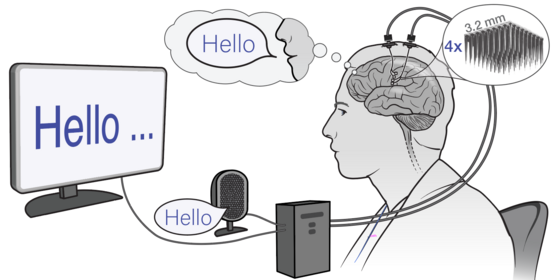

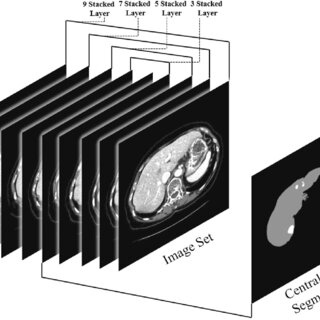

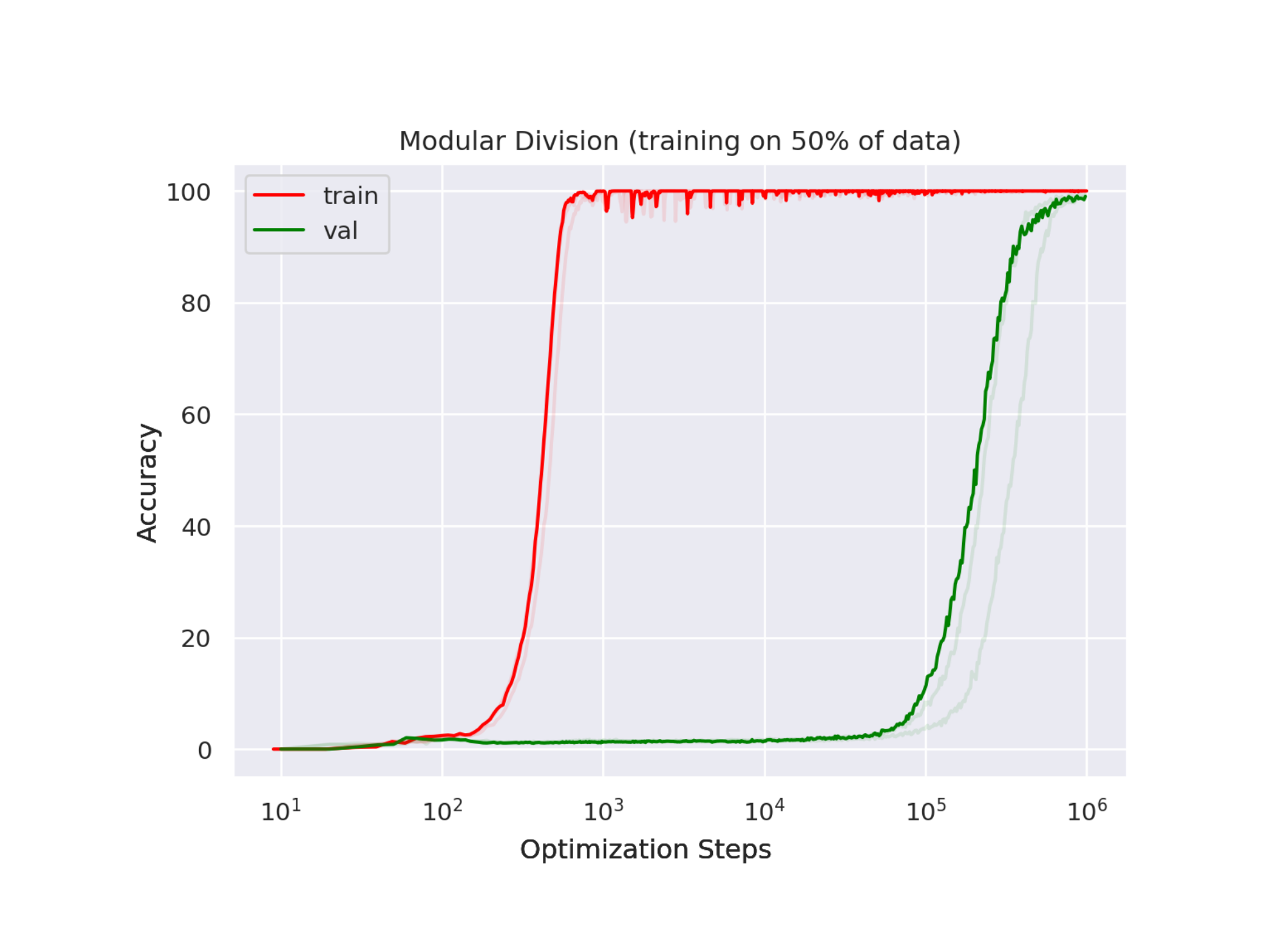

- 🧠 Learn — Build your skills with workshops, books, videos, guides, and notebooks

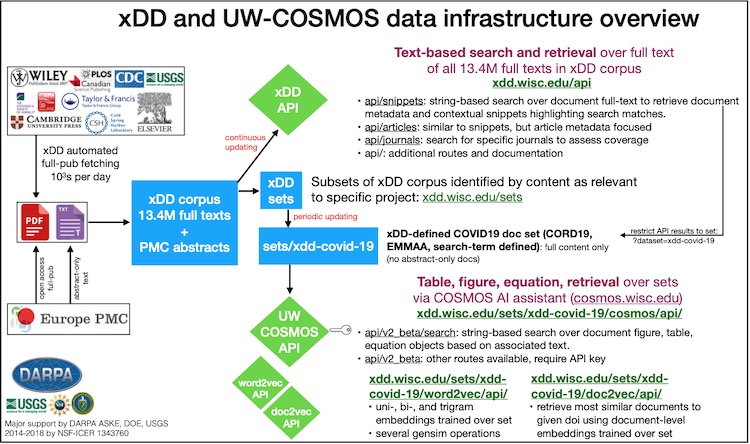

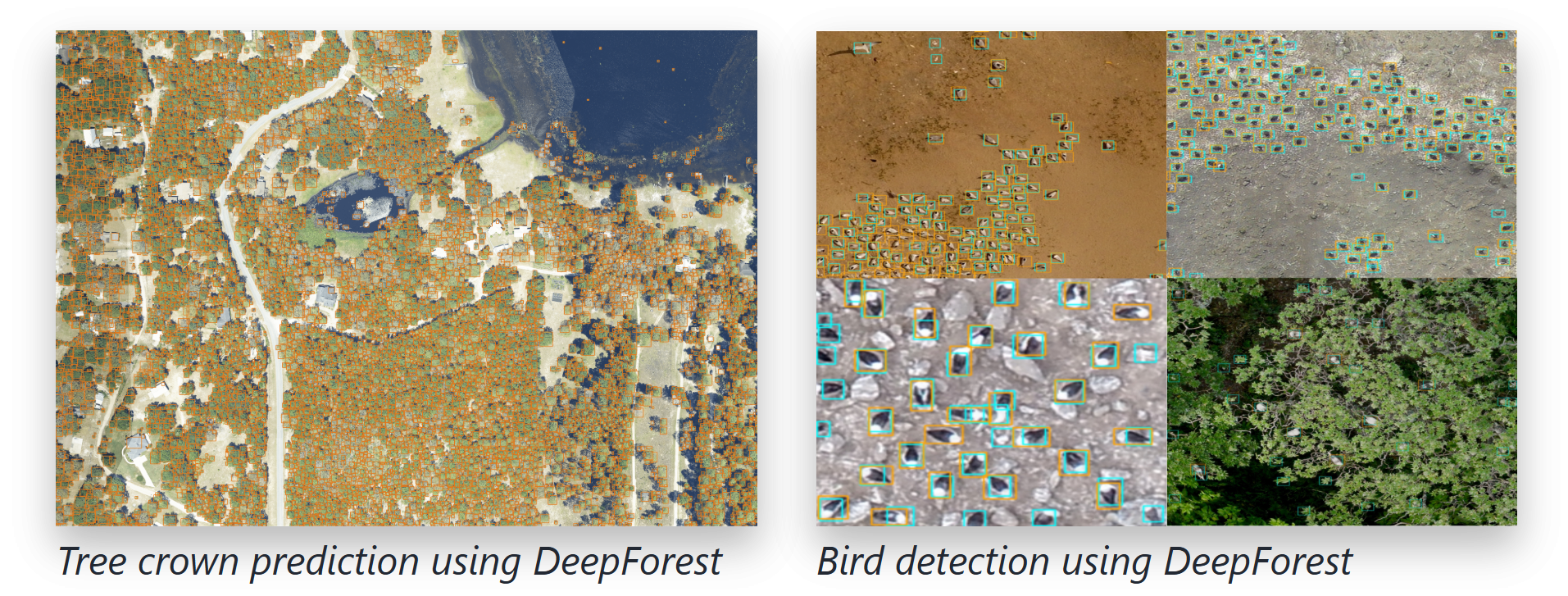

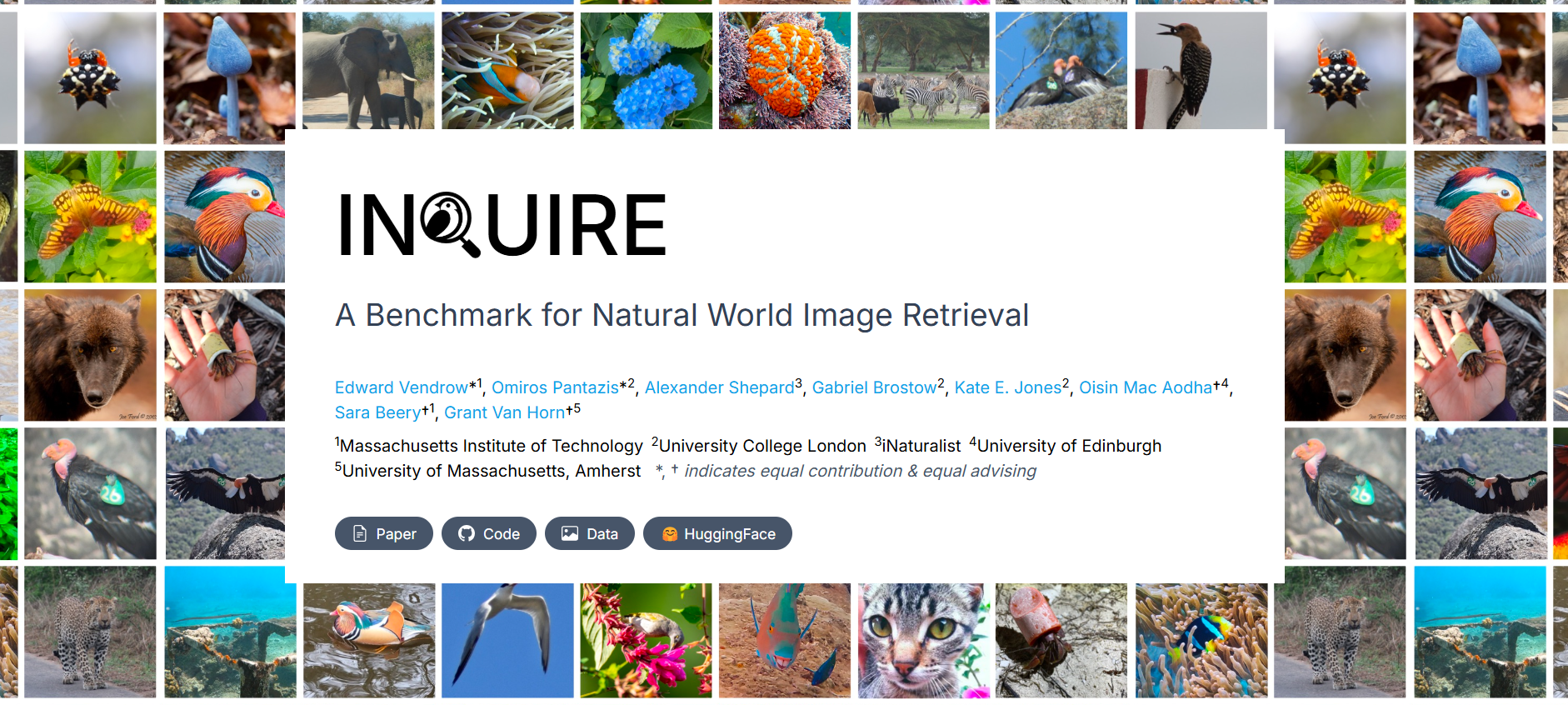

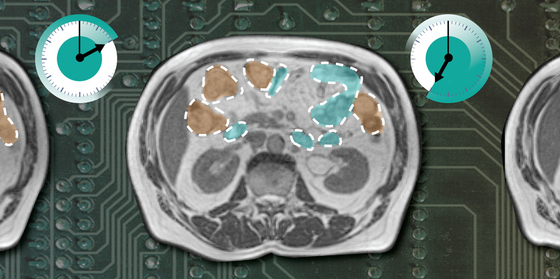

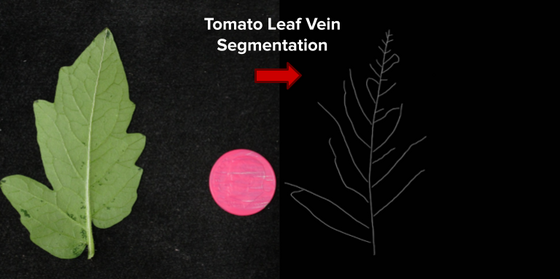

- 🛠 Toolbox — Find models, datasets, libraries, and compute resources for your next project

- 🖊️ Stories — Explore blogs, talks, and real-world applications of ML/AI

- 🚀 Projects — Tackle ML/AI challenges from labs, hackathons, and ML+X partners

Disclaimer: The crowdsourced resources on this website are not endorsed by UW-Madison and have not been vetted by the Division of Information Technology.

Explore resources

To narrow down your search, select one of the general category groupings from the left sidebar. Alternatively, filter by one of the category tags on the right sidebar. Visit the Category glossary if you are unsure about the meaning of any of these tags.